Abstract

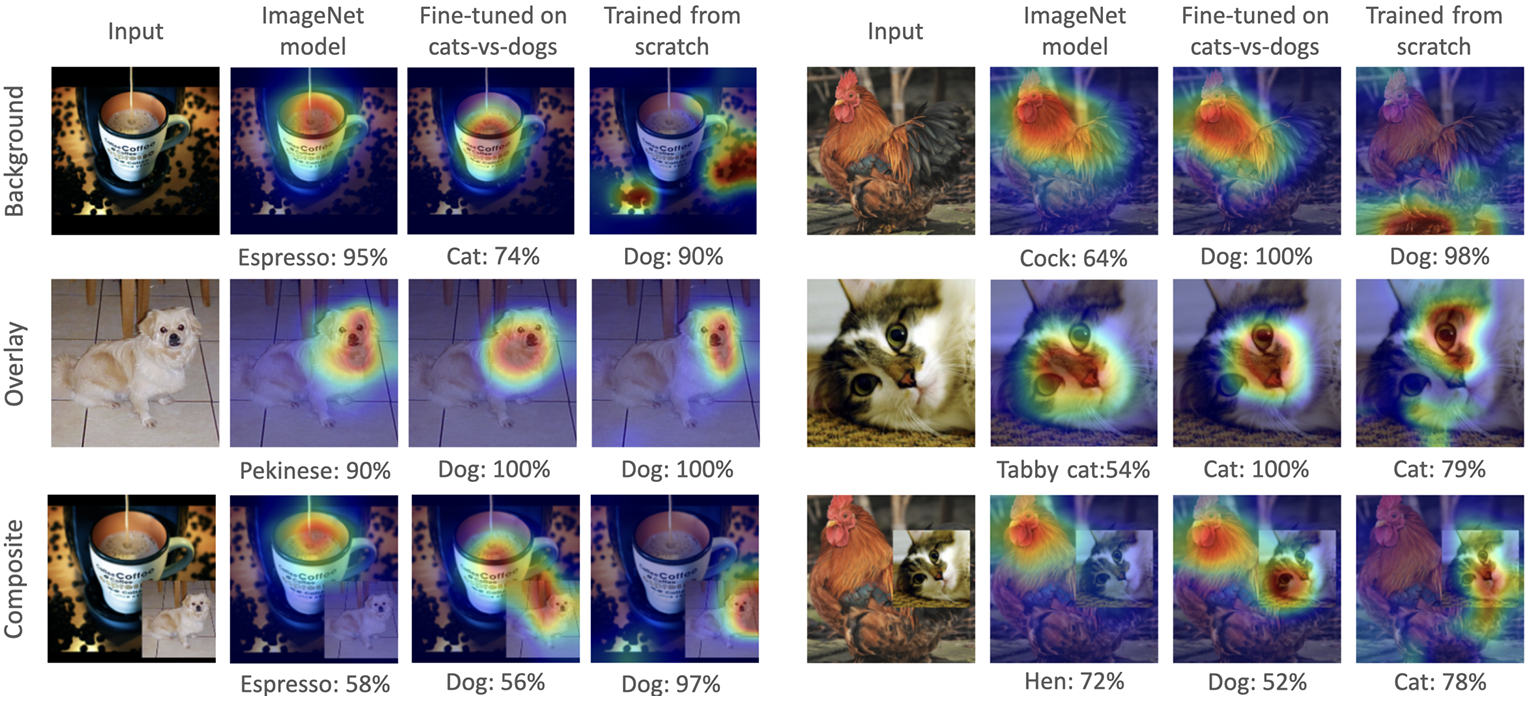

The source domain in transfer learning provides essential features that enable effective and data-efficient learning on the target task. Typically, the finetuning process does not explicitly account for how knowledge about the source domain interacts with the target task. We demonstrate how that knowledge can interfere with the target task, leading to negative transfer. Specifically, certain memories of the source domain can distract the finetuned model on certain inputs. We provide a method to analyze those memories in typical foundation models and to surface potential failure cases of those models. This analysis helps model developers explore remedies for those failure cases.

Citation

Amal Alnouri, Timothy J Wroge, Bilal Alsallakh. Detrimental Memories in Transfer Learning. ICML 2024 Workshop on Theoretical Foundations of Foundation Models, 2024.

BibTeX

@article{,
  title = {Detrimental Memories in Transfer Learning},
  author = {Amal Alnouri and Timothy J Wroge and Bilal Alsallakh},
  journal = {ICML 2024 Workshop on Theoretical Foundations of Foundation Models},
  url = {https://openreview.net/forum?id=mALjVQZV8N},
  year = {2024}
}